Logs From The Server

One of the things I’ve missed, having moved my blog off of Blogger, is the metrics. I don’t use the metrics for much, but there’s a nonzero serotonin hit from knowing that my content is read by someone. It’d be nice to restore at least that piece of the Blogger feature set.

Fortunately, I have access logs and a log analyzer.

I’ve settled on goaccess for my log analysis; it’s pretty straightforward, takes HTTP access logs as input, and presents the data visually (including on the command line). It’s installable on my local machine via the package manager (sudo apt-get install goaccess), so no problems there.

The steps are simple:

- Get the logs

- Dump them into goaccess

The script to do that is short and sweet:

```bash
#!/bin/bash

SERVER=fixermark.com
LOGPATH=logs/personal-blog.fixermark.com/http
DESTINATION=logfiles

mkdir -p "$DESTINATION"

# quote the remote glob so it is expanded on the server, not locally
scp "$SERVER:$LOGPATH/access.log*" "$DESTINATION/"

pushd "$DESTINATION" > /dev/null

# this is redundant because it's a symlink on the server to the most recent logfile
rm -f access.log.0

# -f makes gunzip overwrite files decompressed on a previous run without prompting
gunzip -f *.gz

popd > /dev/null

goaccess "$DESTINATION"/access.log*
```

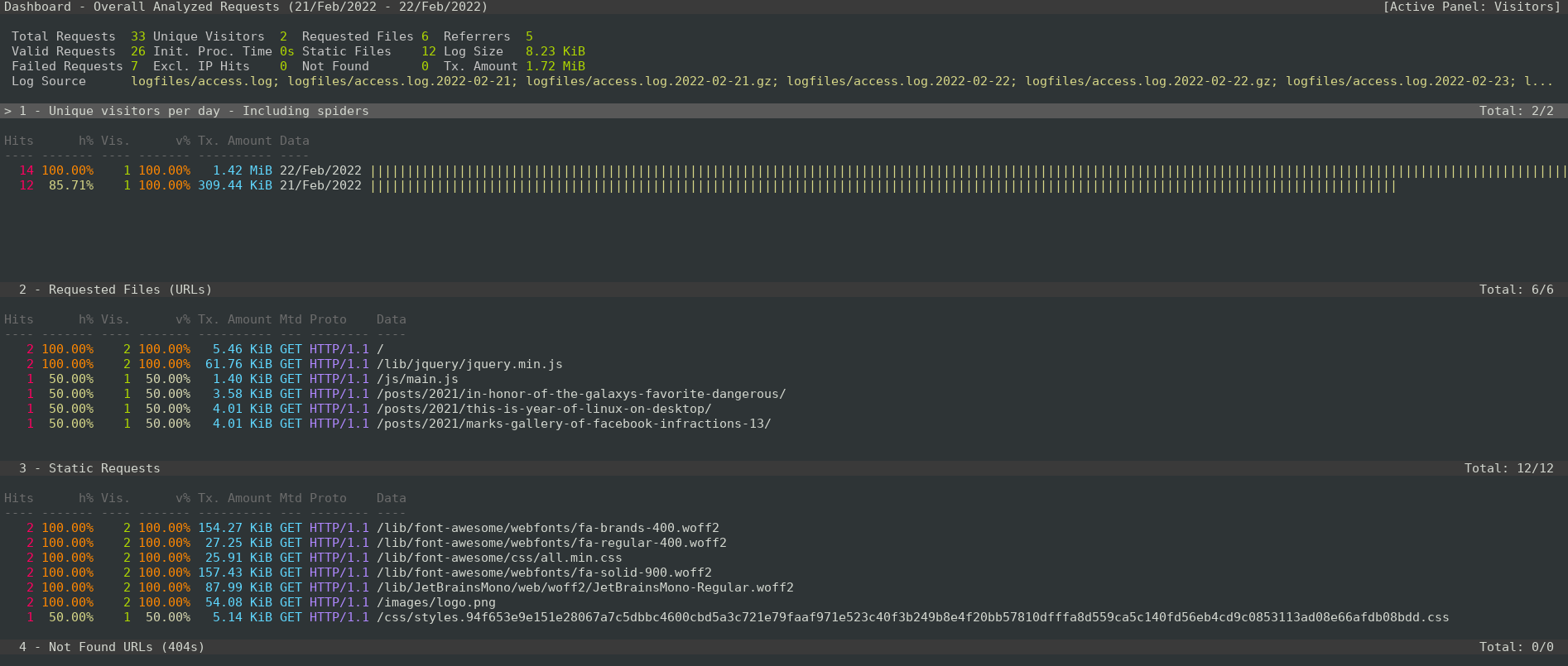

I run that, and I’m presented with a nice terminal interface for viewing the logs.

This is a good start!
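The rm and gunzip steps exist to avoid double-counting access.log.0 and to get the rotated logs into plain text. On the server, where access.log.0 is still a symlink, both could be done in one pass that writes nothing to disk. Here is a minimal sketch, assuming gzip's zcat (whose -f flag copies uncompressed input through unchanged) and assuming the only symlink among the logs is access.log.0, as the script's comment describes; the stream_logs name is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: write every access log in a directory to stdout,
# decompressing rotated .gz files on the fly instead of gunzipping to disk.
stream_logs() {
  local dir="$1" f
  for f in "$dir"/access.log*; do
    # An unmatched glob stays literal; skip it instead of erroring.
    [ -e "$f" ] || continue
    # Skip symlinks (e.g. access.log.0) so the current log isn't counted twice.
    [ -L "$f" ] && continue
    # zcat -f decompresses .gz files and passes plain files through unchanged.
    zcat -f "$f"
  done
}

# Usage sketch (assumes the server's login shell accepts the function
# definition printed by declare -f):
#   ssh fixermark.com "$(declare -f stream_logs); stream_logs logs/personal-blog.fixermark.com/http" \
#     | goaccess --log-format=COMBINED -
```

Streaming over ssh this way would also mean the logs never land in a local directory at all.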

Filtering

goaccess has only limited built-in filtering, but access logs are easy to filter with command-line tools, and goaccess can read its log from standard input (pass - as the filename). Here’s a simple pipeline that drops requests for various pieces of static content:

```bash
# drop requests for static assets, then hand the rest to goaccess on stdin
cat "$DESTINATION"/access.log* | \
  grep -v /lib/ | \
  grep -v /css | \
  grep -v /images/ | \
  grep -v /js/ | \
  goaccess --log-format=COMBINED -
```

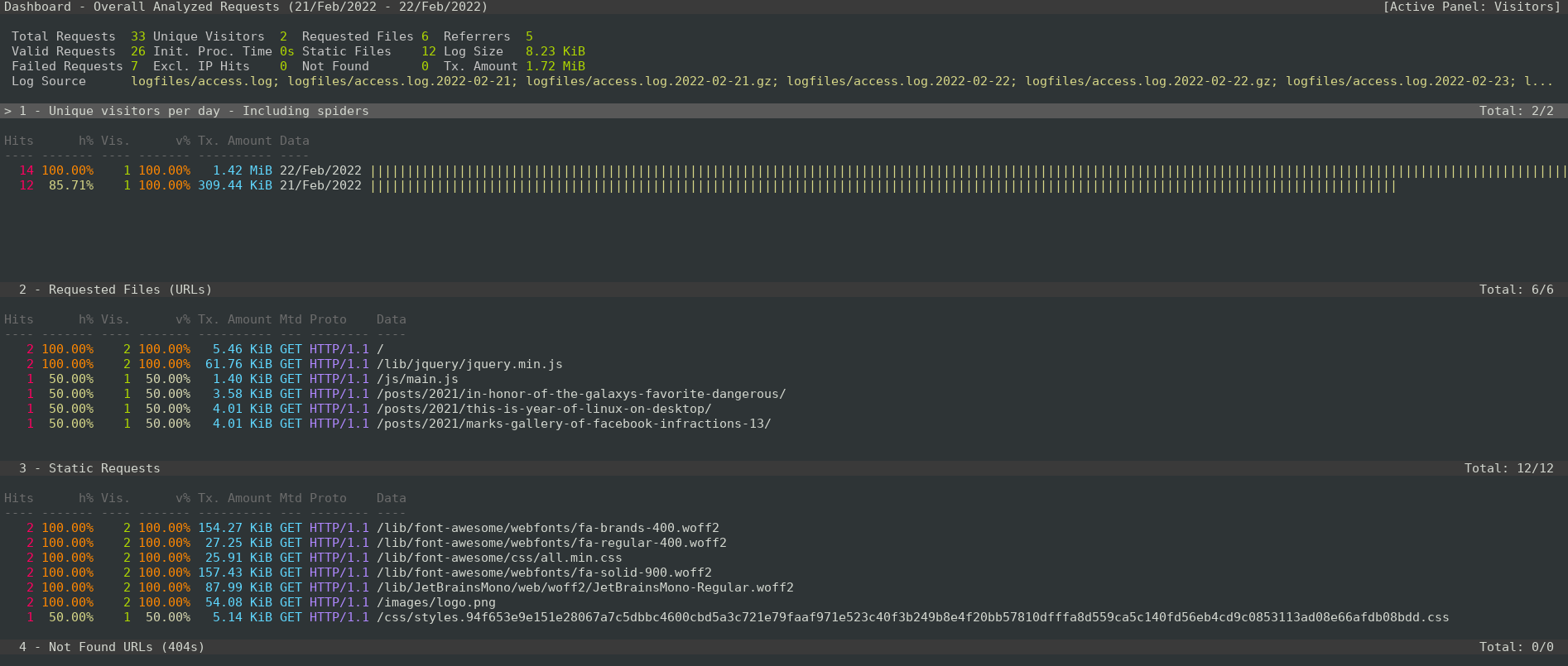

With this added to the fetch script, goaccess now homes in on just the posts.
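One caveat with grep -v is that it matches anywhere on the line, so a request for a post whose referrer or user agent happens to contain /js/ is dropped too. Below is a minimal sketch of a narrower filter, assuming well-formed lines in the combined log format, where the request path is the seventh whitespace-separated field. The filter_static name is hypothetical, and it uses /css/ where the pipeline above uses /css.

```bash
#!/bin/bash
# Hypothetical filter: drop static-asset requests by checking only the request
# path ($7 in the combined log format) instead of the whole line, so referrers
# and user agents that mention /js/ and similar don't cause false drops.
filter_static() {
  awk '$7 !~ /\/(lib|css|images|js)\//'
}

# Usage sketch:
#   cat "$DESTINATION"/access.log* | filter_static | goaccess --log-format=COMBINED -
```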

To do next

There are only a couple of things I’d like to improve in this flow:

- Scrubbing logs: after 21 days, I’d like to replace the IP addresses with 0.0.0.0 to improve user anonymity.

- Run these server-side (or have goaccess pull them remotely, if possible) so logs aren’t living on more machines than strictly necessary.
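The scrubbing item could look something like the following. This is a minimal sketch, assuming GNU find and GNU sed (for in-place editing with -i), and using each file's modification time as a rough stand-in for the age of the entries inside it. The scrub_old_logs name is hypothetical, and it skips .gz files, which would need to be decompressed and recompressed to be scrubbed.

```bash
#!/bin/bash
# Hypothetical helper: replace the client IP (the first field of each
# combined-format line) with 0.0.0.0 in uncompressed access.log* files in a
# directory whose modification time is older than N days (default 21).
# Assumes GNU sed for in-place editing with -i.
scrub_old_logs() {
  local dir="$1" days="${2:-21}"
  # -mtime +N matches files whose age, in whole days, is greater than N.
  find "$dir" -maxdepth 1 -type f -name 'access.log*' ! -name '*.gz' \
    -mtime +"$days" -exec sed -i -E 's/^[^ ]+ /0.0.0.0 /' {} +
}
```

Because sed -i rewrites the file, its modification time resets, so an already-scrubbed file is simply scrubbed again (harmlessly) after another 21 days.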
